When Your AI Agents Can't Work Together: Why Orchestration Is the Real Challenge

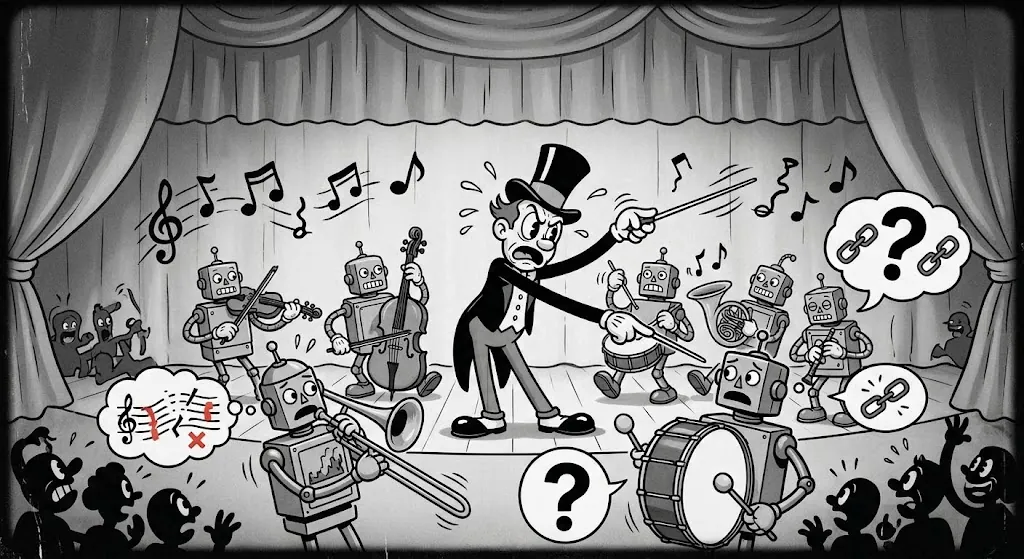

The first multi-agent AI system most teams build works surprisingly well in a demo. An orchestrator agent receives a request, breaks it into subtasks, hands each to a specialised agent, and synthesises a response. The architecture diagram makes sense. Then someone asks: what happens when one agent uses a different model provider than another? What happens when the workflow needs to call an agent managed by a different team, on a different platform?

In most production systems today, it breaks quietly and intermittently. The bottleneck in enterprise AI is no longer individual model quality. It is the absence of a coherent way to make agents work together reliably and at scale.

Why Orchestration Is Now the Hard Problem

Running a single AI model against a single prompt is straightforward. Running a procurement analyst agent that coordinates with a contract review agent, a supplier risk agent, and an ERP integration agent, each potentially from a different vendor, is a fundamentally different challenge. You need agents to discover each other's capabilities. You need them to delegate tasks across boundaries without custom integration code for every pair. You need to control which steps use AI reasoning and which follow a fixed sequence. And you need the whole system to be observable when something fails.

None of that is solved by a better model. It is solved by how you orchestrate the system around your models.

"The intelligence is no longer the constraint. The coordination is."

How AI Agent Orchestration Actually Works

Effective AI orchestration solves four problems in layers: how agents find and communicate with each other, how workflows are structured, how agents are governed, and how the system operates in production.

Agent Discovery and Communication

Before agents can work together, they need a way to advertise what they can do and negotiate how to exchange work. Two open standards are making this practical. The Model Context Protocol (MCP), released by Anthropic in November 2024 and now supported by OpenAI and Google, defines how an agent connects vertically to its tools: databases, APIs, and external services. The Agent-to-Agent protocol (A2A), released by Google in April 2025 with more than 50 partner organisations, handles horizontal communication between agents: how they delegate tasks, pass context, and return results across vendor boundaries. Together they are the beginnings of a composable agent infrastructure that is not tied to a single vendor's stack.

A mature orchestration setup addresses both sides: how agents talk to each other and how they reach their tools. The organisations designing for MCP and A2A compliance today are building the same kind of interoperability advantage that cloud-agnostic architecture created in 2015.
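To make the discovery idea concrete, here is a minimal sketch of agents advertising capabilities and an orchestrator looking them up by skill. It is an in-memory illustration only: `AgentCard`, `Registry`, the skill names, and the `agents.example` URLs are all hypothetical, and none of this reproduces the actual MCP or A2A schemas.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentCard:
    """Hypothetical self-description an agent publishes (not the A2A schema)."""
    name: str
    endpoint: str            # illustrative URL where tasks would be sent
    skills: frozenset        # capabilities the agent advertises


@dataclass
class Registry:
    """In-memory stand-in for a discovery service."""
    cards: dict = field(default_factory=dict)

    def register(self, card: AgentCard) -> None:
        self.cards[card.name] = card

    def find(self, skill: str) -> list:
        """Return every registered agent advertising the skill, sorted by name."""
        hits = (c for c in self.cards.values() if skill in c.skills)
        return sorted(hits, key=lambda c: c.name)


registry = Registry()
registry.register(AgentCard(
    "contract-review", "https://agents.example/contract",
    frozenset({"review_contract"}),
))
registry.register(AgentCard(
    "supplier-risk", "https://agents.example/risk",
    frozenset({"score_supplier", "review_contract"}),
))

# The orchestrator asks "who can review a contract?" instead of hard-coding a peer.
matches = [card.name for card in registry.find("review_contract")]
print(matches)
```

The point of the design is that the orchestrator depends on an advertised skill rather than on a specific agent, so a vendor can be swapped without touching the workflow code.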

Workflow Design: Knowing When to Think and When to Follow the Script

The most important decision in any multi-agent workflow is where to let AI reason dynamically and where to enforce a fixed sequence. Not every step should involve an LLM making judgement calls. Compliance checks, financial calculations, and data transformations should follow a predictable path every time. AI reasoning is best reserved for interpreting ambiguous inputs, synthesising information from multiple sources, or handling edge cases that no set of business rules can fully anticipate.

In practice, this means mixing workflow shapes. Sometimes agents work in a chain, where one finishes before the next starts. Sometimes multiple agents tackle different parts of a problem at the same time. Sometimes a central coordinator decides which specialist to call based on the situation. The best orchestration systems make it easy to compose these patterns and to state explicitly which steps are locked down and which involve AI judgement.
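The three workflow shapes, plus one locked-down step, can be sketched with plain `asyncio`. This is an illustration under stated assumptions, not a framework: the agent functions (`extract_terms`, `score_risk`, `check_compliance`) are hypothetical stand-ins that return strings where a real agent would call a model or a rules engine.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

Step = Callable[[str], Awaitable[str]]


async def extract_terms(doc: str) -> str:
    # Stand-in for an LLM-backed agent; a real one would call a model here.
    return f"terms({doc})"


async def score_risk(doc: str) -> str:
    # Second stand-in agent.
    return f"risk({doc})"


async def check_compliance(doc: str) -> str:
    # Deterministic step: a fixed rule, no model call, same answer every time.
    return "pass" if "nda" in doc.lower() else "needs-review"


async def chain(steps: List[Step], payload: str) -> str:
    """Sequential: each step receives the previous step's output."""
    for step in steps:
        payload = await step(payload)
    return payload


async def fan_out(steps: List[Step], payload: str) -> List[str]:
    """Parallel: every step gets the same input; results keep step order."""
    return list(await asyncio.gather(*(step(payload) for step in steps)))


async def route(routes: Dict[str, Step], key: str, payload: str) -> str:
    """Coordinator: pick one specialist based on the situation."""
    return await routes[key](payload)


async def main() -> dict:
    doc = "Supplier NDA"
    specialists = {"contract": extract_terms, "supplier": score_risk}
    return {
        "chain": await chain([extract_terms, score_risk], doc),
        "parallel": await fan_out([extract_terms, score_risk], doc),
        "routed": await route(specialists, "supplier", doc),
        "compliance": await check_compliance(doc),
    }


results = asyncio.run(main())
print(results)
```

Keeping the deterministic step as an ordinary function, separate from the model-backed ones, makes it visible in code review which parts of the workflow can vary between runs and which cannot.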

"The most important orchestration decision is not which model to use. It is where to allow reasoning and where to enforce predictability."

Governance and Security

When multiple agents coordinate across a workflow, security stops being a settings toggle and becomes a design decision. The simplest way to think about it: an agent should only be able to do what the person who triggered the workflow is allowed to do. If a marketing manager kicks off a reporting workflow, the agents in that chain should not have access to the finance team's write permissions just because someone gave the system broad access during setup. The same logic applies to code execution: agents that run calculations should do so in an isolated environment that cannot touch anything outside its scope.

These decisions are hard to bolt on later. If your organisation operates in a regulated space, designing for auditability and access control from day one is far cheaper than rearchitecting after the first incident.
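One way to express the "agent acts with the caller's authority" rule is to intersect what the agent was granted at setup with what the triggering user actually holds. The sketch below assumes a simple string-based permission model; `Caller`, `run_tool`, and the permission names are hypothetical and stand in for whatever identity system an organisation already uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    """The person who triggered the workflow (hypothetical model)."""
    user: str
    permissions: frozenset


class PermissionDenied(Exception):
    """Raised when a tool call exceeds the caller's authority."""


def effective_permissions(caller: Caller, agent_grants: frozenset) -> frozenset:
    # An agent may use only what both it and the triggering user hold.
    return caller.permissions & agent_grants


def run_tool(caller: Caller, agent_grants: frozenset, required: str) -> str:
    if required not in effective_permissions(caller, agent_grants):
        raise PermissionDenied(f"{caller.user} may not use {required}")
    return f"{required} executed for {caller.user}"


# The reporting agent was given broad access during setup.
reporting_agent_grants = frozenset(
    {"marketing:read", "finance:read", "finance:write"}
)
manager = Caller("marketing-manager", frozenset({"marketing:read"}))

allowed = run_tool(manager, reporting_agent_grants, "marketing:read")

try:
    run_tool(manager, reporting_agent_grants, "finance:write")
    blocked = False
except PermissionDenied:
    blocked = True

print(allowed, blocked)
```

Because the check runs on every tool call, a broad grant made during setup never widens what a given user's workflow can do.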

Running Agents in Production

Building agents that work in a demo is different from running them reliably in production. Agents in production need to remember what happened earlier in a conversation, carry knowledge across sessions, and leave a clear trail when something goes wrong. When an agent produces an unexpected output, you need to trace exactly which steps were taken, what each agent received, and what it returned. Without that visibility, debugging agent failures is guesswork.
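As a rough sketch of that kind of trail, the wrapper below records what each agent received, what it returned, and any error, all under a shared trace ID. The `Tracer` and `Span` classes and the lambda "agents" are hypothetical; a production system would more likely emit these records to an existing tracing backend than keep them in a list.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Callable, List, Optional


@dataclass
class Span:
    """One agent invocation within a workflow run."""
    trace_id: str
    agent: str
    received: str
    returned: Optional[str] = None
    error: Optional[str] = None
    started_at: float = field(default_factory=time.time)


class Tracer:
    """Collects spans for a single workflow run (in-memory illustration)."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex
        self.spans: List[Span] = []

    def call(self, agent: str, fn: Callable[[str], str], payload: str) -> str:
        span = Span(self.trace_id, agent, payload)
        self.spans.append(span)  # record the input before the agent runs
        try:
            span.returned = fn(payload)
            return span.returned
        except Exception as exc:
            span.error = repr(exc)  # keep the failure in the trail, then re-raise
            raise

    def dump(self) -> str:
        return json.dumps([asdict(s) for s in self.spans], indent=2)


tracer = Tracer()
summary = tracer.call("summariser", lambda text: text.upper(), "q3 report")

try:
    # Simulated failure in a downstream agent.
    tracer.call("risk", lambda text: str(1 / 0), summary)
except ZeroDivisionError:
    pass

print(tracer.dump())
```

After the failed run, the dump shows that the `risk` agent received the summariser's output and raised, which is the step-by-step visibility the paragraph above describes.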

Coordination Is the Competitive Advantage

The organisations that will get durable value from multi-agent AI are not those with the best individual agents. They are those with the best coordination layer around them. Breaking complex tasks into specialised agents creates value. Orchestration infrastructure determines whether that value is captured or consumed by coordination overhead.

The parallel to microservices is instructive. Decomposing a monolith into services created real benefits, but it also created a new class of coordination problems. The value was unlocked not by the decomposition itself but by investment in the infrastructure that made decomposed systems manageable. Multi-agent AI is on the same trajectory.

"Every team building agents is building a distributed system. Very few are treating it like one."

Getting Orchestration Right from the Start

At iMSX, we design and build AI-orchestrated systems for enterprise clients, from multi-agent clinical workflows in healthcare to automated compliance pipelines in financial services. Our focus is on the orchestration layer itself: deciding where AI reasoning adds value, where predictability is required, how authority flows through the system, and what failure modes look like before they show up in production.

If your organisation is building or scaling multi-agent AI, the conversation that matters most is not about which model to use. It is about how you orchestrate the system around those models. That is where the leverage is, and we are happy to talk through what that looks like for your workflows.
